Program Areas: Digital Youth

-

For Immediate Release
Washington, DC
Contact: Katharina Kopp, kkopp@democraticmedia.org

###

Senate Advances COPPA 2.0 to Curb Targeted Advertising to Young People

The Center for Digital Democracy’s Director of Policy, Katharina Kopp, Ph.D., released the following statement today:

“We commend the Senate for passing COPPA 2.0 and taking an important step to address the commercial surveillance and targeted advertising practices that dominate today’s digital marketplace. With this legislation, the Senate would extend meaningful privacy protections to teenagers and place clear limits on the ability of online platforms to collect and use young people’s personal data for advertising and marketing purposes. These protections recognize that children and teenagers deserve to participate online without being subjected to constant surveillance, behavioral tracking, and exploitation.

“The legislation preserves the ability of states to enact and enforce stronger protections for children and teens, ensuring that states can continue to lead in addressing emerging risks in the digital marketplace.

“We thank Senators Markey and Cassidy for their leadership in advancing this legislation. We urge the House to move forward with a strong version of COPPA 2.0 that maintains robust protections for young people online.”

###

-

Press Release

Advocates Urge FTC to Halt Meta’s Plan to Use AI Chatbot Data for Ads

Meta’s chatbot data grab risks normalizing surveillance-driven marketing across the industry, setting a dangerous precedent for privacy and consumer protection.

Contact: Jeff Chester, 202-494-7100, Jeff@democraticmedia.org; John Davisson, 202-483-1140, davisson@epic.org

Washington, D.C.

A coalition of 36 privacy, consumer protection, children’s rights, and civil rights advocates and researchers today called on the Federal Trade Commission (FTC) to investigate and halt Meta’s recently announced plan to use conversations with its AI chatbots for advertising and content personalization. The letter, sent to FTC Chair Andrew Ferguson and Commissioners, urges the agency to exercise its oversight authority and act under both Meta’s existing consent decree and Section 5 of the FTC Act to stop this practice from moving forward.

On October 1, 2025, Meta announced that beginning December 16 it would use chatbot interactions on Facebook, Instagram, and WhatsApp to inform ad targeting and personalization. These conversations often contain highly sensitive disclosures, including health, relationship, and mental health information, yet Meta has provided no opt-in consent mechanism and no assurances of heightened privacy or security safeguards.

In the letter, the coalition calls on the FTC to:

- Enforce Meta’s existing consent decrees and require disclosure of risk assessments;
- Treat the practice as an unfair and deceptive act under Section 5 of the FTC Act;
- Suspend Meta’s chatbot advertising program pending Commission review;
- Finalize the long-pending modifications to the 2020 order to strengthen privacy protections, including a proposed prohibition on monetizing minors’ data.

The groups are also urging the Commission to disclose its findings publicly.

The coalition emphasizes that Meta’s initiative is not a marginal product feature but part of a deliberate strategy to expand surveillance-driven marketing. Without FTC intervention, they warn, Meta’s actions will normalize invasive AI data practices across the industry, further undermining consumer privacy and protection.

Quotes

“The FTC cannot stand by while Meta and its peers rewrite the rules of privacy and consumer protection in the AI era. Chatbot surveillance for ad targeting is not a distant threat; it is happening now. Meta’s move will accelerate a race in which other companies are already implementing similarly invasive and manipulative practices, embedding commercial surveillance deeper into every aspect of our lives,” said Katharina Kopp, Deputy Director, Center for Digital Democracy (CDD).

“The FTC has a sordid history of letting Meta off the hook, and this is where it’s gotten us: industrial-scale privacy abuses brought to you by a chatbot that pretends to be your friend,” said John Davisson, Director of Litigation for EPIC. “And where is the Trump-Ferguson FTC? Slow-walking a critical enforcement action brought under Chair Khan to protect minors from Meta’s exploitative data practices. Meta’s appalling chatbot scheme should be a wake-up call to the Commission. It’s time to get serious about reining in Meta.”

Signatories

The 36 organizations that signed the letter include, among others: Center for Digital Democracy; Electronic Privacy Information Center; Public Citizen; Demand Progress Education Fund; ParentsTogether Action; Becca Schmill Foundation; Center for Economic Integrity; Fairplay; National Association of Consumer Advocates; Consumer Federation of America; 5Rights Foundation; Mothers Against Media Addiction (MAMA); and Common Sense Media.

* * *

The Center for Digital Democracy is a Washington, D.C.-based public interest research and advocacy organization, working on behalf of citizens, consumers, communities, and youth to protect and expand privacy, digital rights, and data justice.

The Electronic Privacy Information Center (EPIC) is a 501(c)(3) nonprofit established in 1994 to protect privacy, freedom of expression, and democratic values in the information age through advocacy, research, and litigation. For more than 30 years, EPIC has fought for robust safeguards to protect personal information.

-

Press Release

CDD Joins Coalition of Child Advocates Urging Senate Commerce Committee Members to Advance COPPA 2.0

Letter

June 23, 2025

Dear Chairman Cruz, Ranking Member Cantwell, and Members of the Committee,

Today we write as a coalition of advocates dedicated to the mental and physical health, privacy, safety, and education of our nation’s youth, urging you to advance the Children and Teens’ Online Privacy Protection Act (S. 836), also known as COPPA 2.0. The existing children’s data privacy law is long outdated. Now, with new and even more powerful technologies, like AI, proliferating, and unscrupulous companies collecting ever more personal information from young people, it is more imperative than ever that Congress prioritize privacy protections for children and teens by updating the decades-old Children’s Online Privacy Protection Act (COPPA) and passing COPPA 2.0 out of your Committee.

COPPA 2.0 is an effective, widely supported, bipartisan update to its 25-year-old predecessor. And the Senate has already overwhelmingly approved this bill once, by a vote of 91-3 in July 2024, as part of the Kids Online Safety and Privacy Act.

We appreciate the recent rulemaking efforts of the FTC to update COPPA. However, certain updates, such as adding protections for teenagers 13 and over, can only be made by Congress, and thus COPPA 2.0 is still desperately needed. COPPA 2.0 extends privacy protections to teens, implements strong data minimization principles, bans targeted advertising to covered minors, gives families greater control over their data, and strengthens the law to ensure covered entities cannot evade enforcement. American families urgently need these protections.

Big Tech’s business model relies on the extraction of millions of data points on children and teens, all to rake in record profits through design features that maximize engagement and models that interpret young people’s emotional states in order to make more money off of highly targeted ads.

The use of targeted advertising results in kids being shown ads for alcohol, tobacco, diet pills, and gambling sites, and there is a growing understanding that platforms use highly detailed information about young users to target them with these inappropriate ads at the moment they are feeling insecure or emotionally vulnerable, precisely the moment at which they are most susceptible.

These privacy risks and harms are multiplied because social media platforms are designed to be addictive so that kids spend more time online, giving companies more opportunities to extract information about young users and to target kids and their families with marketing messages more precisely and more often. As the former U.S. Surgeon General has advised, this misuse of young people’s personal information has contributed to a startling mental health crisis among our youth, along with a myriad of online harms, including sexual exploitation, rampant cyberbullying, and eating disorders. The data-driven business model, which is being exacerbated by AI, is directly at odds with the health, safety, and privacy of our nation’s children and teens, and Congress must act to put new safeguards in place.

COPPA 2.0 is a critical piece of the puzzle to protect children and teens. America’s youth and families cannot wait any longer. With our utmost respect, we ask that you move COPPA 2.0 forward.

Sincerely,

American Academy of Pediatrics
Center for Digital Democracy
Common Sense Media
Fairplay

-

Press Release

Statement on the Reintroduction of the Children and Teens’ Online Privacy Protection Act (COPPA 2.0) by Senators Markey and Cassidy

March 4, 2025
Center for Digital Democracy
Washington, DC
Contact: Katharina Kopp, kkopp@democraticmedia.org

The following statement is attributed to Katharina Kopp, Ph.D., Deputy Director of the Center for Digital Democracy:

“The Children and Teens’ Online Privacy Protection Act, reintroduced by Senators Markey and Cassidy and other Senate co-sponsors, is more urgent than ever. Children’s surveillance has only intensified across social media, gaming, and virtual spaces, where companies harvest data to track, profile, and manipulate young users. COPPA 2.0 will ban targeted ads to those under 16, curbing the exploitation, manipulation, and discrimination of children for profit. By extending protections to teens and requiring a simple ‘eraser button’ to delete personal data, this legislation takes a critical step in restoring privacy rights in an increasingly invasive digital world.”

See also the full statement from Senators Markey and Cassidy.

-

Regulating Digital Food and Beverage Marketing in the Artificial Intelligence & Surveillance Advertising Era

Ultra-processed food companies and their retail, online-platform, quick-service-restaurant, media-network and advertising-technology (adtech) partners are expanding their targeting operations to push the consumption of unhealthy foods and beverages. A powerful array of personalized, data-driven and AI-generated digital food marketing is pervasive online and is also designed to influence us offline (such as when we are at convenience or grocery stores). CDD has a number of reports that reveal the extent of this development, including an analysis of the market, guidance on how to research it, and an overview of where policies and safeguards have been enacted.

Unfortunately, there isn’t a single remedy to address such unhealthy marketing. Individuals and families can only do so much to reduce the effects of today’s pervasive tracking and targeting of people and communities via mobile phones, social media, and “smart” TVs. What’s required now is a coordinated set of policies and regulations to govern the ways ultra-processed food companies and their allies conduct online advertising and data collection, especially where public health is at stake. Such an effort, moreover, must be broad-based, addressing a variety of policy areas, such as privacy, consumer protection, and antitrust. Formulating and advancing these policies will be an enormous challenge, but it is one that we cannot afford to ignore.

CDD is working to address all of these issues and more. We closely follow the digital marketplace, especially the food, beverage, retail and online-platform industries. We track, analyze and call attention to harmful industry practices, and are helping to build a stronger global movement of advocates dedicated to protecting all of us from this unfair and currently out-of-control system. We are happy to work with you to ensure that everyone, in the U.S. and worldwide, can live healthier lives without being constantly exposed to fast-food and other harmful marketing designed to increase corporate bottom lines without regard to the human and environmental consequences.

-

Press Release

Statement on the Federal Trade Commission’s Amendments to the Children’s Online Privacy Protection Rule

January 16, 2025
Center for Digital Democracy
Washington, DC
Contact: Katharina Kopp, kkopp@democraticmedia.org

The following statement is attributed to Katharina Kopp, Ph.D., Deputy Director of the Center for Digital Democracy:

As digital media becomes increasingly embedded in children’s lives, it more aggressively surveils, manipulates, discriminates against, exploits, and harms them. Families and caregivers know all too well the impossible task of keeping children safe online. Strong privacy protections are critical to ensuring their well-being and safety. The Federal Trade Commission’s (FTC’s) finalized amendments to the Children’s Online Privacy Protection Rule (COPPA Rule) are a crucial step forward, enhancing safeguards and shifting the responsibility for privacy protections from parents to service providers. Key updates include:

- Restrictions on hyper-personalized data collection for targeted advertising:
  - Mandating separate parental consent for disclosing personal information to third parties.
  - Prohibiting the conditioning of service access on such consent.
- Limits on data retention:
  - Imposing stricter data retention limits.
- Baseline and default privacy protections:
  - Strengthening purpose specification and disclosure requirements.
- Enhanced data security requirements:
  - Requiring robust information security programs.

We commend the FTC, under Chair Lina Khan’s leadership, for finalizing this much-needed update to the COPPA Rule. The last revision, in 2013, was over a decade ago. Since then, the digital landscape has been radically transformed by practices such as mass data collection, AI-driven systems, cloud-based interconnected platforms, sentiment and facial analytics, cross-platform tracking, and manipulative, addictive design practices. These largely unregulated, Big Tech- and investor-driven transformations have created a hyper-surveillance environment that is especially harmful and toxic to children and teens.

The data-driven, targeted advertising business model continues to pose daily threats to the health, safety, and privacy of children and their families. The FTC’s updated rule is a small but significant step toward addressing these risks, curbing harmful practices by Big Tech, and strengthening privacy protections for America’s youth online.

To ensure comprehensive safeguards for children and teens in the digital world, it is essential that the incoming FTC leadership enforce the updated COPPA Rule vigorously and without delay. Additionally, it is imperative that Congress enact further privacy protections and establish prohibitions against harmful applications of AI technologies.

* * *

In 2024, a coalition of eleven leading health, privacy, consumer protection, and child rights groups filed comments with the Federal Trade Commission (FTC) offering a digital roadmap for stronger safeguards while also supporting many of the agency’s key proposals for updating its regulations implementing the bipartisan Children’s Online Privacy Protection Act (COPPA). Comments were submitted by Fairplay, the Center for Digital Democracy, the American Academy of Pediatrics, and other advocacy groups.

The Center for Digital Democracy is a public interest research and advocacy organization, established in 2001, which works on behalf of citizens, consumers, communities, and youth to protect and expand privacy, digital rights, and data justice. CDD’s predecessor, the Center for Media Education, led the campaign for the passage of COPPA over 25 years ago, in 1998.

-

Press Release

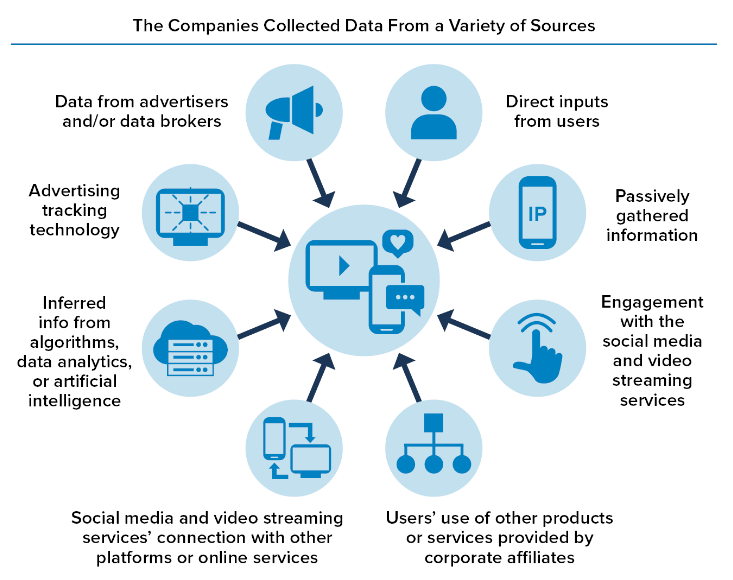

Statement Regarding the FTC 6(b) Study on Data Practices of Social Media and Video Streaming Services

“A Look Behind the Screens: Examining the Data Practices of Social Media and Video Streaming Services”

Center for Digital Democracy
Washington, DC
Contact: Katharina Kopp, kkopp@democraticmedia.org

The following statement can be attributed to Katharina Kopp, Ph.D., Deputy Director, Center for Digital Democracy:

The Center for Digital Democracy welcomes the release of the FTC’s 6(b) study on social media and video streaming providers’ data practices and its evidence-based recommendations.

In 2019, Fairplay (then the Campaign for a Commercial-Free Childhood, or CCFC), the Center for Digital Democracy (CDD), and 27 other organizations, along with their attorneys at Georgetown Law’s Institute for Public Representation, urged the Commission to use its 6(b) authority to better understand how tech companies collect and use data from children.

The report’s findings show that the business model of social media and video streaming providers produces an insatiable hunger for data about people. These companies create a vast surveillance apparatus, sweeping up personal data and creating an inescapable matrix of AI applications. These data practices lead to numerous well-documented harms, particularly for children and teens. These harms include manipulation and exploitation, loss of autonomy, discrimination, hate speech and disinformation, the undermining of democratic institutions, and, most importantly, the pervasive mental health crisis among youth.

The FTC’s call for comprehensive privacy legislation is crucial in curbing the harmful business model of Big Tech. We support the FTC’s recommendation to better protect teens but call, specifically, for a ban on targeted advertising to do so. We strongly agree with the FTC that companies should be prohibited from exploiting young people’s personal information, weaponizing AI and algorithms against them, and using their data to foster addiction to streaming videos.

That is why we urge this Congress to pass COPPA 2.0 and KOSA, which would compel Big Tech companies to acknowledge the presence of children and teenagers on their platforms and hold them accountable. The responsibility for rectifying the flaws in their data-driven business model rests with Big Tech, and we express our appreciation to the FTC for highlighting this important fact.

________________

The Center for Digital Democracy is a public interest research and advocacy organization, established in 2001, which works on behalf of citizens, consumers, communities, and youth to protect and expand privacy, digital rights, and data justice. CDD’s predecessor, the Center for Media Education, led the campaign for the passage of COPPA over 25 years ago, in 1998.

-

Press Release

Statement Regarding the U.S. Senate Vote to Advance the Kids Online Safety and Privacy Act (S. 2073)

Center for Digital Democracy
Washington, DC
July 30, 2024
Contact: Katharina Kopp, kkopp@democraticmedia.org

The following statement can be attributed to Katharina Kopp, Ph.D., Deputy Director, Center for Digital Democracy:

Today is a milestone in the decades-long effort to protect America’s young people from the harmful impacts caused by the out-of-control and unregulated social and digital media business model. The Kids Online Safety and Privacy Act (KOSPA), if passed by the House and enacted into law, will safeguard children and teens from digital marketers who manipulate them and employ unfair data-driven marketing tactics to closely surveil, profile, and target young people. These include an array of ever-expanding tactics that are discriminatory and unfair. The new law would protect children and teens from being exposed to addictive algorithms and other harmful practices across online platforms, protecting the mental health and well-being of youth and their families.

Building on the foundation of the 25-year-old Children’s Online Privacy Protection Act (COPPA), KOSPA will provide protections to teens under 17. It will prohibit targeted advertising to children and teens, impose data minimization requirements, and compel companies to acknowledge the presence of young online users on their platforms and apps. The safety-by-design provisions will require companies to disable addictive product features and to give users the option to opt out of addictive algorithmic recommender systems. Companies will be obliged to prevent and mitigate harms to children, such as bullying and violence, promotion of suicide, eating disorders, and sexual exploitation. Crucially, most of these safeguards will be automatically implemented, relieving children, teens, and their families of the burden to take further action. The responsibility to rectify the worst aspects of their data-driven business model will lie squarely with Big Tech, as it should have all along.

We express our gratitude for the leadership and support of Majority Leader Schumer, Chairwoman Cantwell, Ranking Member Cruz, and Senators Blumenthal, Blackburn, Markey, and Cassidy, along with their staff, for making this historic moment possible in safeguarding children and teens online. Above all, we are thankful to all the parents and families who have tirelessly advocated for common-sense safeguards. We now urge the House of Representatives to act upon their return from the August recess. The time for hesitation is over.

About the Center for Digital Democracy (CDD)

The Center for Digital Democracy is a public interest research and advocacy organization, established in 2001, which works on behalf of citizens, consumers, communities, and youth to protect and expand privacy, digital rights, and data justice. CDD’s predecessor, the Center for Media Education, led the campaign for the passage of COPPA over 25 years ago, in 1998.