
Blog

CDD Welcomes FCC Chairman Wheeler’s Broadband Privacy Proposal

Provides Key New Safeguards for ISP Customers’ Privacy

Washington, DC: Federal Communications Commission Chairman Tom Wheeler announced today that he is circulating a broadband ISP privacy proposal to the other four FCC Commissioners, detailing a plan that would provide individuals with key safeguards regarding their data. The proposal is designed to help implement the FCC “Open Internet” order by ensuring that ISPs respect the privacy of communications over broadband and mobile networks.

The following can be attributed to Katharina Kopp, Deputy Director, Center for Digital Democracy:

“We laud the timely development of a rule that would require ISPs to obtain customer permission before much of their customers’ personal information may be used or shared. This proposal offers consumers much-needed safeguards and the control they want over their own personal information. For the first time, ISPs would have to obtain customer consent before using web browsing and app usage history for advertising purposes. Given the unique position of ISPs as gatekeepers to vast amounts of customer data, the FCC’s proposed broadband privacy rule is a critical step in preserving a free and open Internet into the 21st century.

“Because we know that ISPs’ big data analytical capabilities can turn seemingly non-sensitive information into highly private information about our lives, and because all of our browsing data and the content of our communications are incredibly sensitive to begin with, we had asked the FCC to avoid drawing distinctions between ‘sensitive’ and ‘non-sensitive’ categories of information. Still, we believe that the proposal’s framework can work for consumer privacy, provided the FCC’s definition of ‘sensitive’ is robust and meaningful. We will work to ensure this proposal is effectively implemented and that ISP broadband consumers receive the privacy protections they deserve.”

The Center for Digital Democracy is a leading nonprofit organization focused on empowering and protecting the rights of the public in the digital era.

Blog

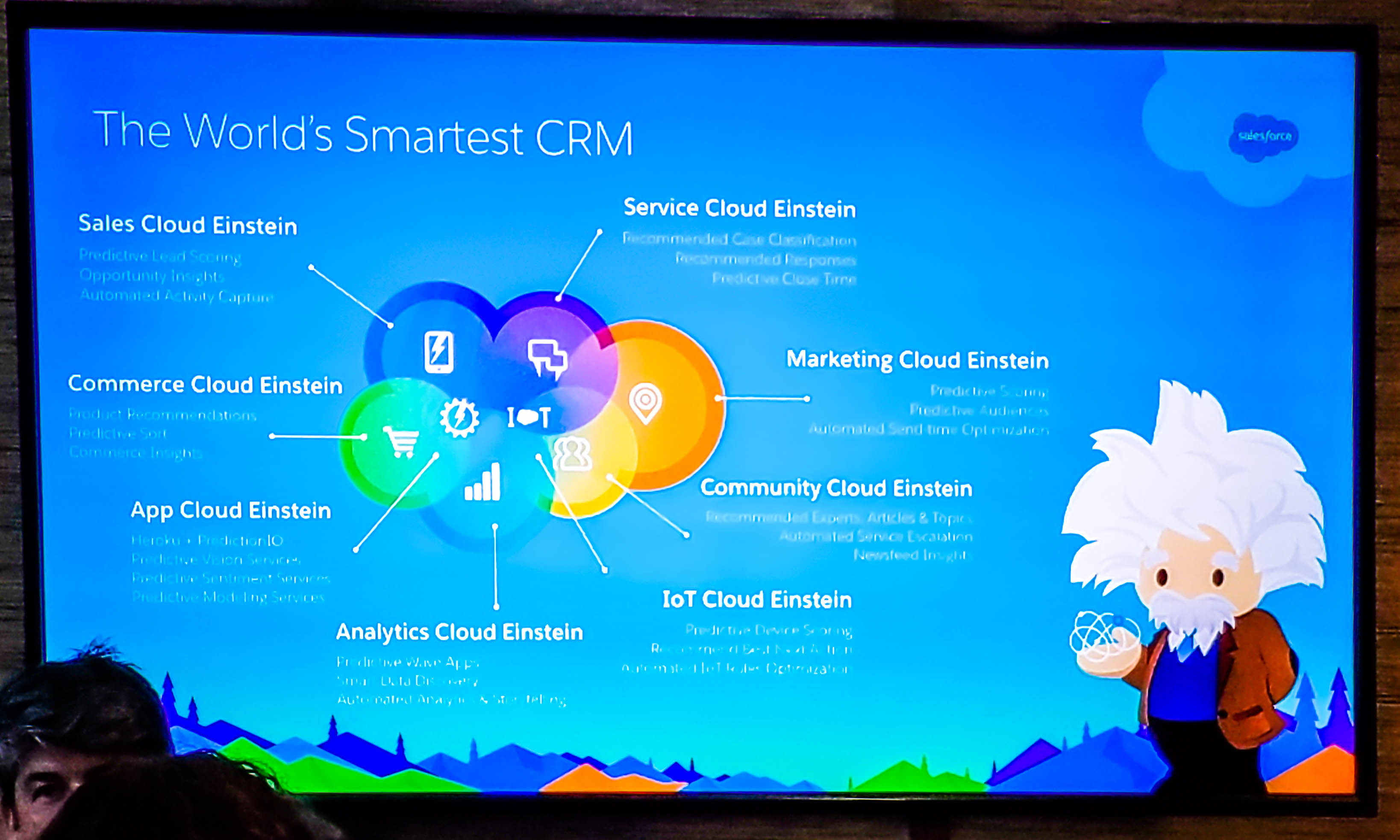

Introducing Salesforce Einstein–AI for Everyone

Artificial Intelligence Uses Big Data for 1:1 consumer targeting/Privacy & Consumer Protection?

Apple’s Siri analyzes thousands of movie showings and, within seconds, surfaces recommendations for the best times and theaters based on my location. Spotify knows my music preferences and curates personalized playlists for me. Facebook instantly recognizes my friends in photos and suggests tags with nearly 98 percent accuracy. All of this is made possible by artificial intelligence (AI): complex and highly technical solutions such as natural language processing, deep learning and machine learning that, when applied to everyday actions in our personal lives, make us smarter and more productive.

Today, Salesforce is delivering Salesforce Einstein: artificial intelligence for everyone. For many companies, the technical expertise, infrastructure and other resources required to deliver AI solutions are too significant to leverage in their enterprise applications. But in keeping with Albert Einstein’s dictum that the definition of genius is taking the complex and making it simple, Salesforce Einstein is removing the complexity of AI, enabling any company to deliver smarter, personalized and more predictive customer experiences.

Salesforce Einstein is a set of best-in-class platform services that bring advanced AI capabilities into the core of the Customer Success Platform, making Salesforce the world’s smartest CRM. Powered by advanced machine learning, deep learning, predictive analytics, natural language processing and smart data discovery, Einstein’s models will be automatically customized for every single customer, and it will learn, self-tune, and get smarter with every interaction and additional piece of data. Most importantly, Einstein’s intelligence will be embedded within the context of business, automatically discovering relevant insights, predicting future behavior, proactively recommending best next actions and even automating tasks.

With Einstein, the world’s #1 CRM is now the world’s smartest CRM, and we’re bringing intelligence to all of our clouds. But don’t take my word for it. See below to hear from our Cloud GMs about what Einstein means for all our clouds:

Sales Cloud Einstein
Service Cloud Einstein
Marketing and Analytics Cloud Einstein
Community Cloud Einstein
IoT Cloud Einstein
App Cloud Einstein

We couldn’t be more excited to finally unveil Salesforce Einstein after two years of hard work and targeted acquisitions. As we continue to build out AI for CRM, we are committed to understanding the next generation of AI technology and how it can best be applied to Salesforce. This effort will be led by Salesforce Research, a new research group focused on the future of AI, under the leadership of Dr. Richard Socher, our Chief Scientist.

Blog

DOJ and FCC Regulators Must Scrutinize Verizon/Yahoo Deal

Need 21st Century Safeguards for Big-Data-driven digital media mergers

Statement of Jeff Chester, CDD executive director:

Regulators, including the DOJ and FCC, must prevent Verizon from taking anticompetitive and unfair advantage of its broadband ISP bird’s-eye view of what its subscribers and consumers do, online and off. The proposed takeover of Yahoo’s core digital data advertising business, when combined with the capability to gather information from its wireless devices, broadband networks, and set-top boxes, gives Verizon control over the key screens that Americans use today. Verizon has already supercharged its use of “Big Data” tactics to monitor its customers and online users, including through its recent shopping spree that includes AOL and Millennial Media (and also enables it to manage part of Microsoft Advertising’s consumer data targeting operations). Verizon’s ability to track a single person across their devices, when they are in a store, at home, at work or at school, and to connect the digital dots to know whether they are now on their mobile phone or at home watching TV, is a threat to the privacy of Americans.

Regulators should closely scrutinize the Yahoo deal to prevent anticompetitive practices related to Verizon’s ability to leverage its mobile and geo-location consumer data. The FCC should also impose strict safeguards that prevent Verizon from combining Yahoo data with what it already knows about its customers and consumers, and it should quickly enact its proposed consumer privacy rules for broadband ISPs. As broadband network monopolies such as Verizon merge with online ad giants, new threats to consumer privacy emerge. That’s why action on FCC Chairman Wheeler’s privacy proposal is required. The Obama Administration and the FCC must ensure that deals like Verizon/Yahoo don’t further erode the little privacy Americans enjoy today when they use digital media.

****

For background on Verizon’s use of data, see the section in our recent report: https://www.democraticmedia.org/article/big-data-watching-growing-digita...

Also, to see Verizon’s growing capabilities to use our geo-location and app data, note the following from Millennial Media’s 2015 10-K (the company is now owned by Verizon). My bold:

“Our robust data management platform, or DMP, allows us to access, analyze and utilize the large volumes of data we possess. This data includes location, social, interest, and contextual data, as well as the insights we derive from measuring campaign effectiveness, providing a unique, multidimensional profile of individual consumers. To date, we have developed more than 700 million active server‑side unique user profiles, over 60 million of which link multiple mobile devices and PCs to a single specific user on an anonymous basis. These user profiles, combined with third party data from our data partners, enable us to deliver more relevant, engaging and effective advertising to our advertising clients. Our data asset also allows us to measure the impact of mobile advertising on consumer engagement, intent and action. We have developed a suite of solutions which measures several different areas of mobile advertising impact. As of December 31, 2014, our platform reached more than 650 million monthly unique users worldwide, including over 175 million monthly unique users in the United States alone. Approximately 60,000 apps and mobile sites are enabled by their developers to receive ads delivered through our platform, and we can deliver ads on over 9,000 different mobile device types and models. While averaging more than three billion ad requests daily throughout 2014, in the last two months of 2014, our platform typically handled over nine billion ad requests daily, including requests received through our supply side tool, and requests received through third party platforms and processed by our programmatic buying tool.”

News

EU data protection rights at risk through trade agreements, new study shows

Strong Safeguards on Privacy and digital-consumer Protection Required

Researchers at the University of Amsterdam’s Institute for Information Law (IViR) have published an independent study today, commissioned by BEUC, EDRi, CDD and TACD. The study shows that the European Union (EU) does not sufficiently safeguard citizens’ personal data and privacy rights in its trade agreements.

Modern digital markets rely on the processing of personal data, but regulations on how to protect these data differ widely from country to country. A new generation of trade agreements increasingly allows unrestricted data transfers, including personal data, between countries. This ground-breaking study sheds light on how trade agreements, for example the future EU-US trade deal (TTIP), treat personal data and privacy. Looking at both EU and international law, the researchers conclude that the EU should protect its citizens’ personal data and prevent their privacy from being weakened in trade agreements. To do so, the EU must take action to safeguard its rules on data protection from legal challenge by its trade partners.

“It’s unacceptable that the EU’s privacy and data protection rules could be challenged through trade policy. Trade deals should not undermine consumers’ fundamental rights and their very trust in the online economy. We’re pleased to see this study clearly echoing the European Parliament’s call to keep rules on privacy and data protection out of trade agreements,” Monique Goyens, Director General of The European Consumer Organisation (BEUC), commented.

“The EU has the responsibility to safeguard people’s rights to privacy and data protection in trade agreements. The European Union has done a great job at setting high standards for these fundamental rights. This study shows how to ensure these high standards can be maintained when trade agreements are negotiated,” said Joe McNamee, Executive Director of European Digital Rights (EDRi).

“The United States is aggressively pushing for a trade deal with the EU that would permit the unprecedented expansion of commercial data collection, threatening both consumers and citizens. America’s data giants want the TTIP to serve as a digital ‘Trojan Horse’ that effectively sidesteps the EU’s human-rights-based data protection safeguards. This new study is a wake-up call for policy makers and the public: any trade deal must first protect our privacy and ensure consumer protection,” added Jeffrey Chester, Executive Director of the Center for Digital Democracy (CDD).

“The EU’s opaque and inconsistent system of granting third countries so-called ‘adequacy’ status for transferring personal data of its citizens makes it vulnerable to legal challenge by trade partners. This is an important finding of this study, and particularly relevant in the week when the much-criticised EU-US Privacy Shield is likely to be approved. The EU must not make some partners more equal than others when deciding on the adequacy of their data protection laws,” said Anna Fielder, Senior Policy Advisor of the Transatlantic Consumer Dialogue (TACD).

Note to editors:

BEUC, the European Consumer Organisation, acts as the umbrella group in Brussels for its 42 national member organisations. Its main task is to represent these members at the European level and defend the interests of all Europe’s consumers. BEUC has a special focus on five areas identified as priorities by its members: Financial Services, Food, Digital Rights, Consumer Rights & Enforcement and Sustainability.

European Digital Rights (EDRi) is an umbrella organisation of 31 civil and human rights organisations from across Europe. Its mission is to promote, protect and uphold civil and human rights in the digital environment.

The Center for Digital Democracy (CDD), a U.S.-based NGO, works to protect the privacy and welfare of the public in the “Big Data” digitally driven marketplace. By combining advocacy, industry research, coalition-building, and media outreach, CDD helps hold accountable some of the most powerful forces shaping the destiny of the world, especially those companies that dominate the global Internet landscape.

The Transatlantic Consumer Dialogue (TACD) is a forum of over 70 EU and US consumer organisations, established in 1998 with the goal of promoting the consumer interest in US and EU policy making.

We the undersigned privacy scholars support the proposal of the Federal Communications Commission to apply and adapt the Communications Act’s Title II consumer protection provisions to broadband internet access services. We commend the Commission’s much-needed efforts to carry out its statutory obligation and to protect the privacy of broadband internet access customers. We agree with the Commission’s proposal and affirm the importance of giving consumers effective notice and control over their personal information by strengthening consumer choice, transparency and data security. In particular, we support the Commission’s proposal to require affirmative consent (opt-in) for use and sharing of customer data for purposes unrelated to providing communications services.

As scholars who have studied, researched, taught, and thought about privacy in depth from a variety of perspectives, we believe it is important that Americans have their privacy protected as they access, use and reap the benefits of the internet, the most fundamental communications network of our times. Privacy is a core human need, and citizens should be able to access the internet without the fear of being watched or of having their data analyzed or shared in unexpected ways. Privacy protections are a vital part of life for free citizens in a democratic society, and they make society as a whole more vibrant, equitable and just.

There are many ways our privacy is under assault in our age of fast-moving technology, which makes it all the more important that we protect that privacy in our bedrock communications system. We welcome innovation and technological progress but do not believe they must be advanced at the expense of privacy. Our fundamental right to privacy should not be sold off for short-term gains, and thus we urge the Commission to adopt its proposed rule, which would significantly advance privacy online.

[see signatories in attachment]


Blog

Consumer, Privacy Groups decry failure to protect consumer facial and biometric privacy by Commerce Department and industry lobbyists

Statement on NTIA Privacy Best Practice Recommendations for Commercial Facial Recognition Use

Alvaro Bedoya, Center for Digital Democracy, Common Sense Kids Action, Consumer Action, Consumer Federation of America, Consumer Watchdog, Privacy Rights Clearinghouse, and U.S. PIRG

The “Privacy Best Practice Recommendations for Commercial Facial Recognition Use” that have finally emerged from the multistakeholder process convened by the National Telecommunications and Information Administration (NTIA) are not worthy of being described as “best practices.” In fact, they reaffirm the decision by consumer and privacy advocates to withdraw from the proceedings. In aiming to provide a “flexible and evolving approach to the use of facial recognition technology,” they provide scant guidance for businesses and no real protection for individuals, and they make a mockery of the Fair Information Practice Principles on which they claim to be grounded.

That is not surprising. It was clear to those of us who participated in this process that it was dominated by commercial interests and that we could not reach consensus on even the most fundamental question: whether individuals should be asked for consent before their images are collected and used for purposes of facial recognition.

Under these “best practices,” consumers have no say. Instead, those who follow these recommendations are merely “encouraged” to “consider” issues such as voluntary or involuntary enrollment; whether the facial template data could be used to determine a person’s eligibility for things such as employment, healthcare, credit or housing; the risks and harms that the process may impose on enrollees; and consumers’ reasonable expectations. No suggestions are provided, however, for how to evaluate and deal with those issues.

If entities use facial recognition technology to identify individuals, they are “encouraged” to provide those individuals the opportunity to control the sharing of their facial template data, but only for sharing with unaffiliated third parties that don’t already have the data, and “control” is not defined. Just as there is nothing that “encourages,” let alone requires, asking individuals for consent for their images to be collected and used for facial recognition in the first place, there is nothing that “encourages” offering them the ability to review, correct or delete their facial template data later. The recommendations merely “encourage” entities to disclose to individuals that they have that ability, if in fact they do. Further, if facial recognition is being used to target specific marketing to, for example, groups of young children, there is no “encouragement” to follow even these weak principles.

There is much more lacking in these “best practices,” but there is one good thing: this document helps make the case for why we need to enact laws and regulations to protect our privacy. If this is the “best” that businesses can do to address the privacy implications of collecting and using one of the most intimate types of individuals’ personal data, their facial images, it falls so short that it cannot be taken seriously, and it demonstrates the ineffectiveness of the NTIA multistakeholder process.

Blog

AT&T: See the data brokers they use in the attached doc. “Best Practices”

How to optimize results on this groundbreaking platform

Addressable TV was launched in 2012 by DIRECTV. Multichannel Video Programming Distributors (MVPDs), such as DIRECTV, are currently the only entities offering true Addressable TV, because it requires access to both the video distribution system and the data center. MVPDs offer Addressable TV in the ad breaks they receive from program networks, such as ESPN and CNN, as part of their carriage agreements. Currently, four MVPDs (DIRECTV, DISH, Comcast, and Cablevision) offer Addressable TV, and that footprint is set to grow to 40 million households by the end of the year.¹ With AT&T’s acquisition of DIRECTV in 2015, AT&T AdWorks now has the largest national addressable platform, offering Addressable TV advertising across nearly 13 million DIRECTV households out of the 26 million combined DIRECTV and U-verse TV households.

In a recent study conducted by Adweek and AT&T AdWorks, a survey of leading marketers indicated that current TV buying (without addressability) isn’t meeting marketing needs, and that there is both frustration and a desire to reach relevant audiences more effectively. Nearly all respondents agree that there is too much waste associated with TV and that traditional methods of measurement are outdated. As a result, over 80% are shifting TV dollars into digital for greater accountability and effectiveness. However, nearly all agree that TV would be more attractive if they “could target more finely.”

As the leader in Addressable TV, AT&T AdWorks has run hundreds of campaigns across a wide array of advertisers and verticals. The purpose of this white paper is to share the learnings from those experiences to inform future campaigns and advertisers, and to demonstrate that TV remains the most impactful advertising medium, made even more effective by addressability.

---

For more information, see the AT&T White Paper PDF.

Blog

Katharina Kopp Joins CDD as Deputy Director and Director of Policy

Leading Privacy Advocate Will Direct CDD’s Work on Big Data and the Public Interest

Katharina Kopp, Ph.D., will join the Center for Digital Democracy (CDD) on 6 June 2016 as its deputy director and director of policy. Dr. Kopp comes to CDD with decades of experience as an advocate, scholar, policy analyst, privacy expert, and corporate leader. She will develop and oversee a range of new initiatives at CDD, expanding the scope of its work on the role and impact of “Big Data” in contemporary society. Dr. Kopp will focus particularly on developing new policies to advance individual autonomy and consumer protections, as well as social justice, equity, and human rights. She will also play a leadership role in the organization’s ongoing constituency-building and grassroots efforts.

Dr. Kopp worked with the Center for Media Education during the 1990s and served as a key policy advocate during the passage and implementation of the Children’s Online Privacy Protection Act (COPPA). In addition to her work with the Aspen Institute, the Benton Foundation, and the Health Privacy Project, Dr. Kopp served as vice president at American Express, leading its global privacy risk management program. Most recently she was the director of the Privacy and Data Project at the Center for Democracy and Technology.

“We are privileged to have Katharina Kopp join CDD,” said Jeff Chester, its executive director. “Her unique leadership qualities and rich background will shape the organization’s agenda in this important new phase of our work. This includes forging partnerships with global NGOs, research institutions, and community-based organizations.”

Dr. Kopp, deputy director, added: “I look forward to exploring the effects of technology and discriminatory data practices on democracy and social justice, particularly the effects on individual autonomy and increasing inequality. How to respond to these trends with appropriate public policy will be core to my work. Furthermore, I want to see CDD engaged in shaping the public’s understanding of these processes and framing the solutions not in individualistic but in collective and systemic terms. I believe that this will be key to the success of any policy proposals.”

The Center for Digital Democracy is a leading nonprofit organization focused on empowering and protecting the rights of the public in the digital era. Since its founding in 2001 (and prior to that through its predecessor organization, the Center for Media Education), CDD has been at the forefront of research, public education, and advocacy protecting consumers in the digital age.

Blog

Today’s FCC Action Will Force Open the “Black Box” of ISP Data Gathering Practices that Threaten Consumer Privacy

Vote Shows Lack of Understanding of the FTC’s Limited Role

Phone and cable ISPs pose a major threat to the privacy of their subscribers and consumers. They have a growing arsenal of “Big Data” capabilities that eavesdrop on their customers, including families. Internet Service Providers are gathering data on what we do and where we go, using sophisticated algorithms and predictive analytics to sell our information to marketers. As CDD documented in a report released last week, ISPs have been on a data buying and partnering shopping spree so they can build in-depth digital profiles of their customers (such as Verizon/AOL/Millennial Media and Comcast/Visible World). Consumers should have the right to make decisions on how their information can be collected, shared or sold. With a set of FCC safeguards, Americans will have some of their privacy restored. We look forward to placing on the record all the ways those ISPs now threaten, and will threaten, the privacy of Americans.

Several commissioners appear uninformed about the ability of the FTC to protect consumer privacy. The FTC does not have the regulatory authority to ensure that Americans’ privacy is protected (except in rare cases, such as the children’s privacy law we helped develop). The FTC’s framework has failed to do anything to check the massive collection of our data that everyone online confronts. It’s the role of the FCC to ensure that broadband networks operate in the public interest, including protecting consumers. Today’s vote reaffirms that the FCC takes its mission to do so seriously.

Blog

Commerce Department Continues to Show Disregard for Consumer Privacy by “convening” industry dominated group on facial recognition without participation of consumer and privacy civil society groups

Lobbyists craft purposefully vague proposals without any real safeguards for biometric data

Americans face new privacy threats from the use of their facial and other biometric information, as personal details of our physical selves are captured, analyzed and used for commercial purposes. Facial recognition technologies are part of the ever-growing data collection and profiling being conducted daily on Americans, whether we are online or offline. Companies want to be able to use the power of facial recognition to make decisions about us, including how we are treated in stores and on websites.

Consumer groups have called on industry to support pro-consumer and pro-rights policies that would ensure an individual can decide whether facial and other personal physical information can be collected in the first place. Last June, however, the industry-dominated process led by the Department of Commerce refused to support respecting a person’s right to control how their biometric data can be gathered and used. As a result, consumer and privacy groups withdrew from the Commerce Department “stakeholder” convening on facial recognition. These meetings, primarily dominated by industry lobbyists, are part of a White House-initiated effort to design “codes of conduct” to ensure Americans have greater privacy rights. But instead of trying to address the consumer and privacy community’s concerns about meaningful safeguards for facial recognition when used for commercial purposes, the Commerce Department merely continued the process without their participation. For the Commerce Department, the priority is to help grow the consumer data profiling industry, regardless of whether Americans face a serious threat to their privacy and the consequences of potentially discriminatory and unfair practices.

Today, the Commerce Department is considering industry proposals on facial recognition that fail to ensure the American public is protected from the growing use of facial data collection for commercial purposes. The drafts allow unlimited use of our most personal data without effective safeguards. Instead of ensuring basic rights, such as giving people the right to make informed decisions prior to the collection of their facial data, the industry proposes a scheme that would allow it to harvest our faces, skin color, age, race/ethnicity and more without any limit. By allowing such a clearly inadequate and self-serving industry proposal to be considered at all, the Department of Commerce (and its NTIA division) demonstrates that it cannot be trusted to protect consumers. It is putting the commercial interests of the data industry ahead of its responsibilities to the American public. The process and the proposals are not reflective of America today. We cannot believe that President Obama endorses how his Commerce Department has transformed the idea of a “Consumer Privacy Bill of Rights” into one that really gives carte blanche to the unfettered use of our faces and other highly personal biometric information.